Ever heard the words, “You’ll see it reflect in an hour, or tomorrow”? That delay exists because many integrations still run as scheduled batches: processes that move data at fixed intervals to synchronise data (or events) between the source and the display. When updates only occur on a schedule, data goes stale between runs, processing windows get overloaded, and recovery takes longer when something fails. For businesses, that means slower decisions, inconsistent reports, and customers seeing outdated information. Event-Driven Architecture (EDA) addresses this by replacing time-boxed batches with small, real-time events that move as changes happen, keeping systems, teams, and customers in sync.

Why Event-Driven Architecture matters:

- Faster data, fewer delays: events flow in seconds, not in nightly batches.

- Lower change risk: Producers and consumers are decoupled, so a change at the origin of the data does not force a change at each destination.

- Better resilience: A failure is contained to a single event, which can be rerun on its own rather than repeating a whole batch.

- Built-for-growth: Easier to add new destinations (dashboards, AI, alerting) for the data without rewriting the source.

EDA in practice (platform-agnostic, business-first)

An “event” is a fact (OrderCreated, ShipmentDispatched, JobCreated). Producers publish events once; many consumers subscribe and react in their own time. You can run EDA on multiple backbones:

- Boomi: Use Event Streams for Kafka-style topics, or standard Boomi connectors/listeners for event sources and sinks. Great when your integration team already lives in AtomSphere and wants managed streaming alongside flows and APIs.

- Azure Service Bus: A fully managed enterprise message broker with topics/subscriptions, sessions, FIFO ordering (with sessions), dead-lettering, scheduled delivery, and rich retry controls. Ideal in Microsoft-centric estates or when you want deep Azure governance/observability baked in.

- Azure Event Grid: Use Azure Event Grid to subscribe to BlobCreated events from Azure Storage and forward them directly to an Azure Service Bus queue or topic (with retries, dead-lettering, and managed identity). Add advanced filters (container, path, suffix like “.csv”) so every relevant file drop becomes a small, reliable message for downstream consumers – no polling or batch windows.
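As a rough illustration of the file-drop filtering described above, the sketch below mimics in plain Python how a prefix/suffix filter on the event subject narrows BlobCreated events to CSV files in one container. It does not call Azure. The container name `meter-reads`, the names `SubjectFilter` and `matches_subscription`, and the sample events are assumptions for illustration; the subject layout follows the format Azure Storage uses for blob events.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SubjectFilter:
    """Local stand-in for a subscription's event-type and subject filters."""
    event_type: str
    subject_begins_with: str
    subject_ends_with: str


def matches_subscription(event: dict, flt: SubjectFilter) -> bool:
    """Return True when the event would pass the filter and be forwarded.

    This sketch compares case-sensitively; check the case-sensitivity
    setting on a real subscription before relying on that behaviour.
    """
    subject = event.get("subject", "")
    return (
        event.get("eventType") == flt.event_type
        and subject.startswith(flt.subject_begins_with)
        and subject.endswith(flt.subject_ends_with)
    )


# Hypothetical container: only CSV drops in "meter-reads" should be forwarded.
csv_drops = SubjectFilter(
    event_type="Microsoft.Storage.BlobCreated",
    subject_begins_with="/blobServices/default/containers/meter-reads/",
    subject_ends_with=".csv",
)

base = "/blobServices/default/containers"
sample_events = [
    {"eventType": "Microsoft.Storage.BlobCreated",
     "subject": f"{base}/meter-reads/blobs/2024/reads-001.csv"},
    {"eventType": "Microsoft.Storage.BlobCreated",
     "subject": f"{base}/meter-reads/blobs/2024/readme.txt"},
    {"eventType": "Microsoft.Storage.BlobDeleted",
     "subject": f"{base}/meter-reads/blobs/2024/reads-000.csv"},
    {"eventType": "Microsoft.Storage.BlobCreated",
     "subject": f"{base}/archive/blobs/reads-001.csv"},
]

# Only the first sample event satisfies all three conditions.
forwarded = [e for e in sample_events if matches_subscription(e, csv_drops)]
```

Filtering at the subscription means consumers never see irrelevant drops, which keeps each downstream message small and purposeful.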

Reality check: You don’t have to choose one forever. Many clients run hybrid: Service Bus for app-to-app messaging within Azure; Boomi Event Streams (or Boomi flows + external Kafka) for cross-platform, vendor-neutral streaming; Boomi processes to transform, enrich, and land data where it needs to go.
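Whichever backbone you pick, the publish-once, subscribe-many model from the start of this section stays the same. A minimal in-memory sketch, assuming a toy `InMemoryBroker` class (hypothetical, not a Boomi or Azure API) that delivers synchronously, whereas a real broker would deliver asynchronously and durably:

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class InMemoryBroker:
    """Toy broker: one publish fans out to every subscriber of the event type."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        """Register a consumer; the producer never needs to know about it."""
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> int:
        """Deliver the event to each subscriber; return how many received it."""
        handlers = self._subscribers.get(event_type, [])
        for handler in handlers:
            handler(payload)
        return len(handlers)


broker = InMemoryBroker()
billing_seen: list[dict] = []
analytics_seen: list[dict] = []

# Two independent consumers subscribe to the same fact.
broker.subscribe("OrderCreated", billing_seen.append)
broker.subscribe("OrderCreated", analytics_seen.append)

# The producer publishes once; both consumers receive the event.
delivered = broker.publish("OrderCreated", {"orderId": "A-100"})
```

Adding a third consumer is one more `subscribe` call; the producer code does not change.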

Where EDA pays off (quick business wins)

Some examples where Event-Driven Architecture delivers value across a range of business processes:

1. Water billing & customer experience – Real-time billing updates

Now: A water utility runs billing on an overnight batch: files land in storage, a job posts charges to the billing system, and statements are generated hours later. Call centre staff and online portals are working off yesterday’s position; if the batch fails, there’s pressure to re-run jobs and explain incorrect balances to customers.

With EDA: Each file drop (e.g. meter reads or billing extracts) raises a BlobCreated event via Azure Event Grid, which fans into Service Bus for validation, enrichment, and bill generation steps. InvoiceGenerated and InvoiceIssued events are written back to the core system and data store as they happen, so portals and agents see up-to-date balances and documents within minutes, not after a nightly window.

2. Field service & work orders – Faster dispatch & closure

Now: Work orders for field crews (e.g. leaks, meter swaps, repairs) are created during the day but only synchronised to the mobile system on a schedule. Status updates and photos come back in batches when devices reconnect or at the end of the shift. Planners have little real-time view, SLAs are at risk, and customers can’t reliably be told when a worker will be on site or has already been.

With EDA: WorkOrderCreated, WorkOrderAssigned, and TechnicianStatusUpdated events flow through a broker as they occur. The vendor sees new jobs instantly, status updates reflect immediately, and downstream systems (attachments, notes, customer comms) subscribe to the same event stream. The result is near real-time visibility from “job raised” through to “job completed” (with notes and photos) without waiting on sync windows.

3. System health & alerts – From mountains of logs to a live view

Now: In a financial institution, integration errors are buried in flat logs or emailed overnight as reports. Operations teams spend time doing “log archaeology” the next morning to understand what failed, which customers were affected, and whether any action is still required. By the time someone notices a pattern, it’s already impacted customers and SLAs.

With EDA: Every significant failure or degradation condition emits a BusinessErrorRaised or TechnicalErrorRaised event onto a monitoring/alerting stream. These events feed a real-time dashboard and alerting platform, which aggregates by system, severity, and customer impact. Ops can see issues as they emerge, drill into the source of the data or stage of the integration flow, and track recovery via ErrorResolved events, rather than waiting for batch reports or manual checks.
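To make the aggregation step concrete, here is a small sketch of how a dashboard feed might count unresolved errors per system and severity. The field names `errorId`, `system`, and `severity`, the function name `summarise_open_errors`, and the sample stream are assumptions for illustration; only the three event names come from the scenario above.

```python
from collections import Counter

RAISED_TYPES = ("BusinessErrorRaised", "TechnicalErrorRaised")


def summarise_open_errors(events: list[dict]) -> Counter:
    """Count errors that are still unresolved, keyed by (system, severity)."""
    open_errors: dict[str, tuple[str, str]] = {}
    for event in events:
        if event["type"] in RAISED_TYPES:
            open_errors[event["errorId"]] = (event["system"], event["severity"])
        elif event["type"] == "ErrorResolved":
            # Resolving an unknown error is ignored rather than treated as a fault.
            open_errors.pop(event["errorId"], None)
    return Counter(open_errors.values())


# Hypothetical stream: three errors raised, one of them later resolved.
stream = [
    {"type": "TechnicalErrorRaised", "errorId": "e1",
     "system": "payments", "severity": "high"},
    {"type": "BusinessErrorRaised", "errorId": "e2",
     "system": "payments", "severity": "high"},
    {"type": "TechnicalErrorRaised", "errorId": "e3",
     "system": "crm", "severity": "low"},
    {"type": "ErrorResolved", "errorId": "e1"},
]

summary = summarise_open_errors(stream)
```

Because the view is rebuilt from events, the same stream can drive both the live dashboard and alert thresholds without a separate overnight report.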

4. Fleet & logistics – Accurate availability, less manual chasing

Now: A truck fleet management company updates vehicle status (available, on hire, in maintenance) and location via periodic batch jobs. The booking system only gets fresh data after the job runs, so vehicles appear available when they’re not, planners are on the phone frequently reconciling reality with the system, and utilisation reports are out of date.

With EDA: Vehicle telematics and operational systems publish VehicleStatusChanged and LocationUpdated events to a broker as they happen. A real-time API connected to the database consumes these events and backs the management website, planning tools, and reporting. Availability reflects current state, maintenance and utilisation are better balanced, and planners can focus on exceptions instead of fixing data after each batch run.
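One way to picture the consuming side is a current-state view that applies each event as it arrives and ignores anything older than what it already holds. This is a sketch only: the `FleetView` class, the `vehicleId` and `sequence` fields, and the status values are assumptions, and a real system would persist the view in the database rather than in memory.

```python
from dataclasses import dataclass, field


@dataclass
class FleetView:
    """Current-state view built by applying events as they arrive."""
    status: dict[str, str] = field(default_factory=dict)
    last_seen: dict[str, int] = field(default_factory=dict)

    def apply(self, event: dict) -> bool:
        """Apply a VehicleStatusChanged event; return False if it was skipped."""
        if event["type"] != "VehicleStatusChanged":
            return False
        vehicle = event["vehicleId"]
        if event["sequence"] <= self.last_seen.get(vehicle, -1):
            return False  # stale or duplicate: keep the newer state
        self.status[vehicle] = event["status"]
        self.last_seen[vehicle] = event["sequence"]
        return True

    def available(self) -> list[str]:
        """Vehicles whose latest known status is 'available'."""
        return sorted(v for v, s in self.status.items() if s == "available")


def status_event(vehicle: str, status: str, sequence: int) -> dict:
    """Helper to build a hypothetical VehicleStatusChanged event."""
    return {"type": "VehicleStatusChanged", "vehicleId": vehicle,
            "status": status, "sequence": sequence}


view = FleetView()
view.apply(status_event("T1", "available", 1))
view.apply(status_event("T2", "available", 1))
view.apply(status_event("T1", "on_hire", 3))
# This event arrives late (sequence 2 after 3) and must not overwrite "on_hire".
late_applied = view.apply(status_event("T1", "available", 2))
```

The sequence check is what stops a late-arriving event from making a hired truck look available again.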

Design patterns to prevent common EDA failures

- Event Notification vs Event-Carried State Transfer: send a lightweight “something changed” or include the key fields consumers need; avoid forcing consumers to call back synchronously unless necessary.

- Idempotency & exactly-once (or close): design consumers to handle duplicates; use keys or hash checks to prevent redoing already processed records.

- Ordering where it matters: use Azure Service Bus sessions for sequence-sensitive flows (e.g. payments, ledger).

- Dead-letter & retry policy: define when to retry, when to put aside, and how to reprocess.

- Schema governance: version your event contracts (JSON Schema), publish a catalogue and deprecate gracefully.

- Choreography with guard-rails: prefer choreographed events; introduce orchestration only where cross-domain consistency requires it (e.g., sagas with compensations).
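Two of the patterns above, idempotency and dead-letter/retry policy, tend to live in the same consumer. The following is a minimal sketch under stated assumptions: `IdempotentConsumer`, the `eventId` key, and the in-memory set and list are illustrative stand-ins for a durable processed-ID store and a broker-managed dead-letter queue, and real retries would normally add a backoff delay.

```python
from typing import Callable


class IdempotentConsumer:
    """Skips duplicates, retries failures, and sets aside events that keep failing."""

    def __init__(self, handler: Callable[[dict], None], max_attempts: int = 3) -> None:
        self._handler = handler
        self._max_attempts = max_attempts
        self._processed_ids: set[str] = set()
        self.dead_letters: list[dict] = []

    def receive(self, event: dict) -> str:
        """Return 'processed', 'duplicate', or 'dead-lettered'."""
        event_id = event["eventId"]
        if event_id in self._processed_ids:
            return "duplicate"  # already handled: do not redo the work
        last_error = ""
        for attempt in range(1, self._max_attempts + 1):
            try:
                self._handler(event)
            except Exception as exc:
                last_error = f"attempt {attempt}: {exc}"
                continue
            self._processed_ids.add(event_id)
            return "processed"
        # Retries exhausted: keep the event and the reason for later reprocessing.
        self.dead_letters.append({"event": event, "reason": last_error})
        return "dead-lettered"


ledger: list[tuple[str, int]] = []


def post_payment(event: dict) -> None:
    """Hypothetical handler: rejects bad amounts, otherwise records the payment."""
    if event["amount"] <= 0:
        raise ValueError("amount must be positive")
    ledger.append((event["eventId"], event["amount"]))


consumer = IdempotentConsumer(post_payment)
first = consumer.receive({"eventId": "p-1", "amount": 50})
second = consumer.receive({"eventId": "p-1", "amount": 50})  # redelivery
bad = consumer.receive({"eventId": "p-2", "amount": -5})
```

The dead-letter entry keeps the original event alongside the failure reason, which is what a reprocessing runbook needs.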

Boomi + Azure Service Bus: how they fit together

EDA isn’t a one-size-fits-all model. The right backbone depends on your estate, governance model, and operational goals. Boomi and Azure Service Bus can complement each other well, and many clients use both as part of a connected integration strategy.

If an organisation already subscribes to much of the Microsoft technical product range, leveraging that could reduce integration complexity and cost. If not, some cost savings can be achieved by staying within the Boomi ecosystem.

Option A: Azure-first backbone

- Publish domain events to Service Bus topics.

- Use Boomi consumers to transform/enrich and fan out to SaaS/legacy systems (NetSuite, Salesforce, on-prem).

- Pros: Azure governance/monitoring, enterprise messaging semantics, encryption and RBAC with your existing Azure policies.

Option B: Boomi-first backbone

- Publish to Boomi Event Streams (Kafka-based) for high-throughput fan-out or guaranteed delivery.

- Use Boomi processes to route, transform, and persist; bridge to Service Bus where Azure apps need native semantics.

- Pros: single pane of glass for integration + streaming, strong fit for teams already invested in Boomi runtimes and connectors.

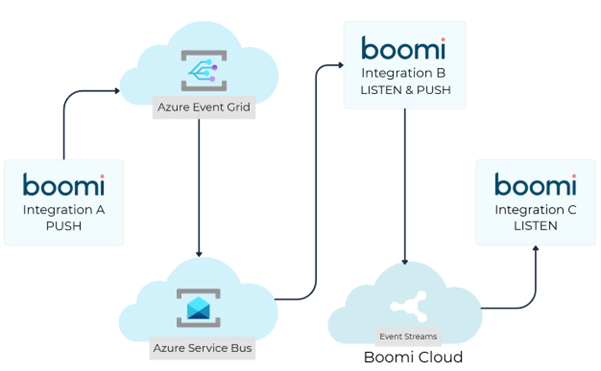

Option C: Hybrid

File drop events are routed via Azure Event Grid to an Azure Service Bus queue; a Boomi process listens to the queue, performs Extract, Transform, Load (ETL), and pushes the result to a selected topic in Event Streams for later upload to the destination endpoint. This decouples source and target while still letting file drops drive the flow.
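The shape of that hybrid flow can be sketched with in-memory stand-ins for each hop. Nothing here calls Azure or Boomi: `blob_store`, `service_bus_queue`, `event_stream_topic`, the function names, and the `MeterReadReceived` event are all hypothetical, chosen only to show where the decoupling sits.

```python
import csv
import io
from collections import deque

# Stand-ins for storage, the Service Bus queue, and an Event Streams topic.
blob_store = {"meter-reads/2024-06-01.csv": "meter_id,reading\nM1,120\nM2,98\n"}
service_bus_queue: deque = deque()
event_stream_topic: list[dict] = []


def on_blob_created(blob_path: str) -> None:
    """Event Grid stand-in: turn a file drop into one small queue message."""
    service_bus_queue.append({"type": "BlobCreated", "path": blob_path})


def run_etl_listener() -> int:
    """Boomi-process stand-in: drain the queue, transform rows, publish them."""
    published = 0
    while service_bus_queue:
        message = service_bus_queue.popleft()
        content = blob_store[message["path"]]            # extract
        for row in csv.DictReader(io.StringIO(content)):
            event_stream_topic.append({                  # transform + load
                "type": "MeterReadReceived",
                "meterId": row["meter_id"],
                "reading": int(row["reading"]),
            })
            published += 1
    return published


# A file lands, the listener picks it up, and two row-level events are published.
on_blob_created("meter-reads/2024-06-01.csv")
published_count = run_etl_listener()
```

The source only ever writes a file and the destination only ever reads a topic; neither needs to know the other exists.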

Recommendations for how to migrate to event-driven architecture

Moving to EDA doesn’t need a big-bang rollout. A staged approach helps teams prove value early, stabilise patterns, and scale confidently. Start small: pick one high-visibility batch and break it up across a Service Bus topic or Boomi Event Streams using two or three event types (or one, if the batch only represents a single event). Next, add dashboards or alerts, and consider establishing a method for schema versioning and publishing, perhaps even an event catalogue. Finally, repeat the process for the next most visible batch, and consider automating code promotion. That is also a good time to establish a dead-letter queue management runbook and perform some capacity testing.
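For the schema versioning step, a first event catalogue can be very small. The sketch below uses required-field sets as a lightweight stand-in for full schema validation; the `CATALOGUE` contents, the `schemaVersion` and `data` envelope fields, and both function names are assumptions for illustration.

```python
# Hypothetical catalogue: (event type, version) -> required data fields.
CATALOGUE = {
    ("InvoiceIssued", 1): {"invoiceId", "amount"},
    ("InvoiceIssued", 2): {"invoiceId", "amount", "currency"},
}


def validate(event: dict) -> list[str]:
    """Return a list of problems; an empty list means the event fits its contract."""
    key = (event.get("type"), event.get("schemaVersion"))
    required = CATALOGUE.get(key)
    if required is None:
        return [f"unknown contract {key}"]
    missing = sorted(required - set(event.get("data", {})))
    return [f"missing field: {name}" for name in missing]


def upgrade_to_v2(event: dict, currency: str) -> dict:
    """Lift a v1 InvoiceIssued event to v2 without mutating the original."""
    return {
        "type": event["type"],
        "schemaVersion": 2,
        "data": {**event["data"], "currency": currency},
    }


v1_event = {"type": "InvoiceIssued", "schemaVersion": 1,
            "data": {"invoiceId": "INV-7", "amount": 42}}
incomplete_v2 = {"type": "InvoiceIssued", "schemaVersion": 2,
                 "data": {"invoiceId": "INV-8", "amount": 10}}

v1_problems = validate(v1_event)
v2_problems = validate(incomplete_v2)
upgraded = upgrade_to_v2(v1_event, currency="NZD")  # currency value is illustrative
```

Keeping old versions in the catalogue lets existing consumers carry on while new ones adopt the later contract, which is what makes deprecation gradual.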

Common EDA pitfalls (and how to avoid them)

- Event sprawl: Too many processes handling the same type of events. Fix with a domain-driven catalogue and owner per event type.

- Silent failures: An event never finds its way to the destination, but because the processing is fire-and-forget, nothing at the source is waiting for a response. Make use of dead-letter queues and retries, coupled with alerting on both the source and destination sides.

- Tight coupling: Transformations or validations that are specific to the destination appear at the start of the event processing rather than at the end. Keep events descriptive and not prescriptive by separating the source logic from the destination logic (i.e. don’t include logic about the destination when transforming the data at the start of the chain).
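The tight-coupling fix can be illustrated with one descriptive event and two destination-specific mappings that live with their consumers. The event fields, the carrier name, and the functions `to_crm_payload` and `to_warehouse_row` are hypothetical.

```python
# The producer states what happened, using neutral field names.
shipment_dispatched = {
    "type": "ShipmentDispatched",
    "shipmentId": "S-9",
    "customerId": "C-42",
    "dispatchedAt": "2024-06-01T08:30:00Z",
    "carrier": "ExampleFreight",
}


def to_crm_payload(event: dict) -> dict:
    """CRM consumer: owns the mapping into the CRM's own shape."""
    return {
        "contact_ref": event["customerId"],
        "note": f"Shipment {event['shipmentId']} dispatched via {event['carrier']}",
    }


def to_warehouse_row(event: dict) -> tuple[str, str, str]:
    """Reporting consumer: owns a different mapping of the same event."""
    dispatch_date = event["dispatchedAt"][:10]  # keep the date part only
    return (event["shipmentId"], dispatch_date, event["carrier"])


crm_payload = to_crm_payload(shipment_dispatched)
warehouse_row = to_warehouse_row(shipment_dispatched)
```

If the CRM changes its field names, only `to_crm_payload` changes; the producer and the reporting consumer are untouched.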

How you get low-risk, high-return EDA with Adaptiv

Event-Driven Architecture touches every part of the business, from delivery and architecture to operations and customer experience. Adaptiv helps each group realise measurable value from the same change. For delivery leaders, it means faster releases and fewer cross-team hand-offs. For architects, it means cleaner boundaries, auditable contracts, and clear failure modes. For operations, it’s predictable runbooks, observable queues/topics, and steady incident reduction. For the business, it means fresher data, happier customers, and the ability to plug in new services without derailing the core.

Adopting EDA doesn’t need to be complex or risky. With Adaptiv, the transition to real-time integration is structured and transparent. Each step is designed to reduce uncertainty while keeping data flowing smoothly across your systems:

Discovery & value map: We identify batch pain points, pick the right backbone(s) (Azure Service Bus, Boomi Event Streams, or hybrid), and define event boundaries.

Landing zone & guardrails: Topics/subscriptions/partitions provisioned through infrastructure-as-code, role-based access control, network rules, retry/DLQ defaults, and naming/versioning standards.

Build the “thin slice”: Keep the initial scope to one producer and two consumers, with idempotency, ordering, dashboards, and a replay playbook built in.

Enablement: Hands-on sessions and a living event catalogue so your teams can extend the pattern safely.

Managed evolution: Ongoing optimisation – throughput tuning, schema evolution, and expansion to new domains (analytics, AI agents, partner events).

If your goal is to move from delayed updates to live, dependable data across your enterprise, a short discovery session with Adaptiv can help you identify the best place to start.